K7 Tech portfolio

Our selected work

Get to know some of our most interesting projects.

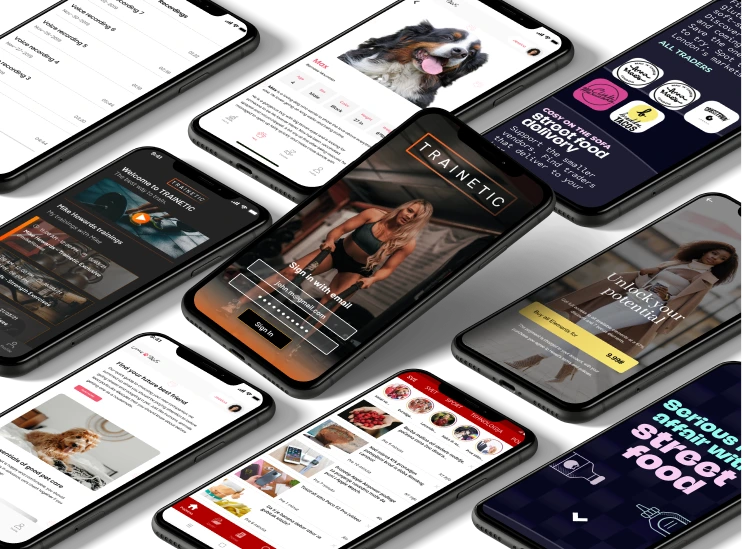

Mobile development

Custom-made mobile apps for Android and iOS devices, built with usability and an enjoyable user experience in mind. We build world-class applications for clients across industries, focusing on impeccable performance above all.

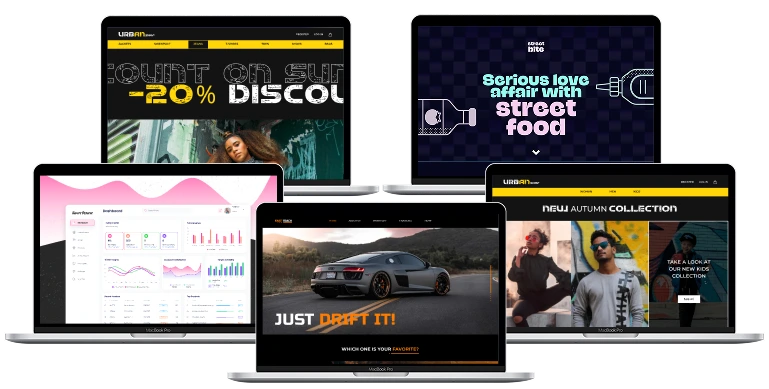

Web Development

Expertly engineered web solutions that suit all your business needs: eye-catching interfaces that boost your online visibility, and customer-centric web applications that drive business growth.